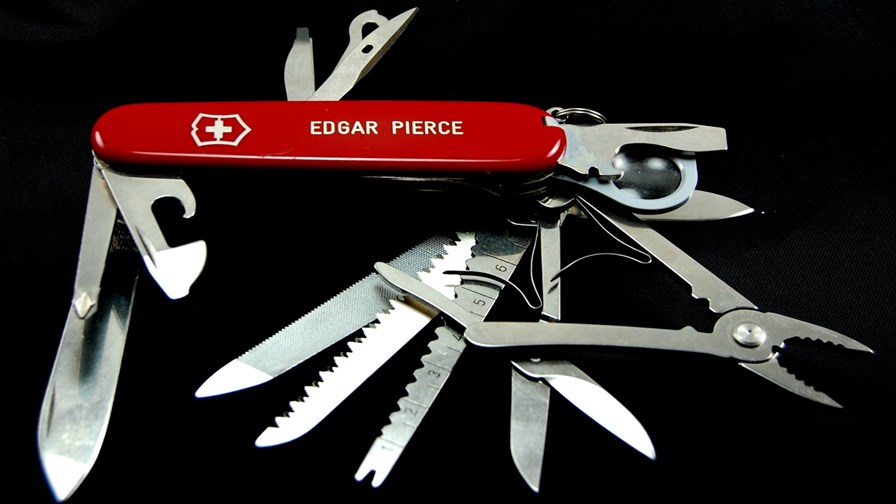

via Flickr © edgarpierce (CC BY 2.0)

- FPGAs are a mix of logic, memory and DSP blocks offering high throughput in real time

- FPGAs are winning out in the market because of their flexibility

- They are also a great low-power assist for big data crunching at the edge and in the core

They’ve been called the ‘Swiss army knife of semiconductors’ (well, they have now), at least by Dan McNamara, general manager of the Programmable Solutions Group at Intel, who outlines below how Intel’s field programmable gate arrays (FPGAs) are gaining momentum in the marketplace because of their flexibility.

We’ve also heard that FPGAs might play an important role in CSP virtualisation efforts, so an alternative to FPGA (the acronym) might be (just a suggestion) ‘White Chip’ - same role in a way as the ‘white box’ in virtualisation. Both are designed to be updated with software as required so that they can be programmed to flexibly take on dynamically assigned workloads.

FPGAs are currently most at home ‘parallelizing’ heavy workloads in datacenters and at the edge of the network, where they are often set up to run algorithms that process massive amounts of real-time data - the sort of application that is expected to be needed to thin out IoT traffic before it starts congesting the core.

One of the problems with so-called ‘white box’ virtualisation, especially when the boxes are deployed away from the core and near the edges of the network they serve, is that they often can’t economically take on specialised functions without having extra bits wired into them, which rather works against the whole white box rationale. So in these situations, CSPs tend to revert to, or maintain the use of, proprietary boxes with embedded logic. It makes sense that, over time, the use of ‘white chip’ FPGAs in out-of-data-centre locations might increase to cope with this problem.

Press Release: Intel FPGAs: accelerating the future

May 15, 2018

Field Programmable Gate Arrays Accelerate Applications from the Cloud to the Edge

By Dan McNamara

Intel® field programmable gate arrays (FPGAs) continue to gain momentum in the marketplace. Paired with Intel® processors, FPGAs are uniquely positioned to accelerate growth across a range of use cases from the cloud to the edge, unlocking the power of data to transform our world.

FPGAs are the Swiss army knife of semiconductors because of their flexibility. These devices can be programmed anytime – even after equipment has been shipped to customers. FPGAs contain a mixture of logic, memory and digital signal processing blocks that can implement any desired function, with extremely high throughput, and in real time. This makes FPGAs ideal for many critical cloud and edge applications.

The Internet of Things (IoT) is projected to reach up to 50 billion smart devices in 2020. That is about six smart devices for every human on Earth. Each person will generate about 1.5 GB of data daily, while each smart connected machine will generate as much as 50 GB daily. Extracting business intelligence by storing, processing and analyzing this massive amount of data in real time and in a power-efficient manner is a benefit that FPGAs bring to cloud and edge computing.
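The ‘about six devices per person’ figure can be checked with a quick back-of-the-envelope calculation. The sketch below uses the numbers quoted in the release; the world population value is an assumption (roughly 7.6 billion, not stated in the article), and the function names are purely illustrative.

```python
# Figures quoted in the press release
SMART_DEVICES_2020 = 50e9        # projected smart devices in 2020
GB_PER_PERSON_PER_DAY = 1.5      # data generated per person, daily
GB_PER_MACHINE_PER_DAY = 50.0    # data generated per connected machine, daily (upper figure)

# ASSUMPTION: world population of about 7.6 billion; this number is not in the article
WORLD_POPULATION = 7.6e9


def devices_per_person(devices=SMART_DEVICES_2020, population=WORLD_POPULATION):
    """Average number of smart devices per human."""
    return devices / population


def machine_to_person_data_ratio(machine_gb=GB_PER_MACHINE_PER_DAY,
                                 person_gb=GB_PER_PERSON_PER_DAY):
    """How many times more data one machine generates than one person, per day."""
    return machine_gb / person_gb


if __name__ == "__main__":
    print(f"Devices per person: {devices_per_person():.1f}")
    print(f"Machine vs person daily data: {machine_to_person_data_ratio():.1f}x")
```

Under that population assumption the ratio comes out between six and seven devices per person, in line with the release’s ‘about six’, and a single connected machine produces roughly 33 times the daily data of a single person.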

The recent announcement that Intel FPGAs are bringing power to artificial intelligence in Microsoft Azure* is a perfect example. The foundation is Project Brainwave, Microsoft’s principal architecture for serving real-time artificial intelligence (AI) that is used in Bing’s intelligent search, and now offered in Azure and at the edge.

Whether in the cloud or at the edge, Intel FPGAs offer a low-latency and power-efficient path to realize real-time AI without the need for batching calculations into smaller processing elements. For example, FPGA-powered AI is able to achieve extremely high throughput that can run ResNet-50, an industry-standard deep neural network requiring almost 8 billion calculations, without batching. This is achievable in FPGAs because the programmable hardware, including logic, DSP and embedded memory, allows any desired logic function to be easily programmed and optimized for area, performance or power. Since this fabric is implemented in hardware, it can be customized and can perform parallel processing, which makes it possible to achieve orders of magnitude of performance improvement over traditional software or GPU design methodologies.

Enterprise applications are also leveraging this same capability. Dell EMC* and Fujitsu* are putting the Intel Arria® 10 GX Programmable Acceleration Cards (PAC) into off-the-shelf servers for enterprise data centers. These accelerator cards are designed to work with Intel Xeon® processors across workloads, such as real-time data analytics, AI, video transcoding, financial, cybersecurity and genomics. These are data-intensive workloads facing an explosion of data and benefitting from the real-time and parallel processing offered by FPGAs. Intel has fostered an expansive partner ecosystem to develop full turnkey solutions across these workloads using the Acceleration Stack for Intel Xeon CPU with FPGAs.

Levyx* – a big data company led by former financial services industry executives – uses the Intel PAC based on Arria 10 FPGAs to accelerate financial backtesting, a commonly used approach to help predict the performance of computational trading strategies for financial instruments, including a full range of securities, options and derivatives. It’s a highly parallel, data- and compute-intensive workload that can often take many hours, or even days, to execute. Levyx was able to obtain 850 percent faster performance on financial backtesting using FPGAs. The attached graphic shows data across 50 algorithm simulations on 20 stock trading symbols. The results are compelling.

Figure 1. The Levyx and Intel solution accelerates backtesting workloads
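To see why backtesting is described as highly parallel, note that every (strategy, symbol) pair can be evaluated independently of all the others. The following is a minimal CPU-side sketch of that structure, not Levyx’s or Intel’s implementation: the price series is a synthetic random walk, the ‘strategy’ is a toy moving-average rule, and all function names are hypothetical. Only the 50 x 20 grid size comes from the release.

```python
from concurrent.futures import ThreadPoolExecutor
from itertools import product
import random

N_STRATEGIES = 50   # from the release: 50 algorithm simulations
N_SYMBOLS = 20      # from the release: 20 stock trading symbols


def synthetic_prices(symbol_id, n_days=250):
    """ASSUMPTION: a seeded random walk standing in for real market data."""
    rng = random.Random(symbol_id)
    price = 100.0
    series = []
    for _ in range(n_days):
        price *= 1.0 + rng.gauss(0.0, 0.01)
        series.append(price)
    return series


def backtest(task):
    """Toy rule: hold the stock for a day whenever price is above its trailing mean."""
    strategy_id, symbol_id = task
    window = 5 + strategy_id          # each 'strategy' differs only in window length
    prices = synthetic_prices(symbol_id)
    equity = 1.0
    for t in range(window, len(prices) - 1):
        trailing_mean = sum(prices[t - window:t]) / window
        if prices[t] > trailing_mean:
            equity *= prices[t + 1] / prices[t]
    return strategy_id, symbol_id, equity


def run_grid(max_workers=8):
    """Evaluate every (strategy, symbol) pair; no task depends on another."""
    tasks = list(product(range(N_STRATEGIES), range(N_SYMBOLS)))
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(backtest, tasks))


if __name__ == "__main__":
    results = run_grid()
    print(f"Ran {len(results)} independent backtests")
```

Python threads will not actually speed up this CPU-bound loop; the point is only that the 1,000 tasks share no state, which is the property that lets hardware such as an FPGA run many of them side by side.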

In the cloud, we are seeing FPGA adoption at an unprecedented scale as enterprises tackle big data. At the edge there is a similar paradigm shift. According to research reports, the majority of data from those 50 billion smart connected devices in 2020 will be generated by machines, not humans. Data will come from a wide range of industries, including manufacturing, robotics, healthcare and retail.

Dahua*, a leading solution provider in the global video surveillance industry, and Canada’s National Research Council (NRC) are embedding Intel FPGAs in their edge applications.

Dahua has partnered with Intel to accelerate its Deep Sense series of servers using FPGAs for real-time inference at the edge, performing facial comparisons across a database of 100,000 images. Because these servers need to perform facial recognition rapidly while operating in a bandwidth- and power-constrained environment, FPGA technology serves as a platform for low-latency and power-efficient edge inferencing.

Canada’s NRC is helping to build the next-generation Square Kilometre Array (SKA) radio telescope, to be deployed in remote regions of South Africa and Australia, where viewing conditions are ideal for astronomical research. The SKA will be the world’s largest radio telescope, 10,000 times faster and with image resolution 50 times greater than the best radio telescope we have today. This increased resolution and speed result in an enormous amount of image data, with the system processing the equivalent of a year’s data on the internet every few months.

NRC’s design embeds Intel® Stratix® 10 SX FPGAs at the Central Processing Facility located at the SKA telescope site in South Africa to perform real-time processing and analysis of collected data at the edge. High-speed analog transceivers allow signal data to be ingested in real time into the core FPGA fabric. After that, the programmable logic can be parallelized to execute any custom algorithm optimized for power efficiency, performance or both, making FPGAs the ideal choice for processing massive amounts of real-time data at the edge.

From cloud computing, to edge, IoT and our traditional embedded markets, Intel is at the forefront of technology. While others are predicting the future, we’re busy creating it. And in PSG, we’re accelerating it.

I look forward to sharing how Intel FPGAs are driving innovation in 5G wireless, wireline, chiplet technologies and more—unlocking the power of data to transform our world.

Daniel (Dan) McNamara is corporate vice president and general manager of the Programmable Solutions Group (PSG) at Intel Corporation.
